Summary statistics for bivariate data

STAT1003 – Statistical Techniques

Dr. Emi Tanaka

Australian National University


Bivariate data

Bivariate data involves two different variables (\(x\) and \(y\)) for each observation.

- Suppose \(x_i\) and \(y_i\) are two variables measured on the same unit \(i\) for \(i = 1, 2, \ldots, n\).

- It allows us to explore relationships and associations between the two variables.

- Examples:

- Height and weight of individuals.

- Temperature and ice cream sales.

- Study time and exam scores.

- Eye color and hair color.

| | Categorical | Numerical |
|---|---|---|
| Categorical | | |
| Numerical | | |

Contingency table

A contingency table (also known as a cross-tabulation or crosstab) displays the frequency distribution of two or more categorical variables.
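As a minimal sketch of how such a table is built, the counts can be tallied with Python's standard library. The observations below are hypothetical and made up purely for illustration; they are not data from the course.

```python
from collections import Counter

# Hypothetical observations: (eye color, hair color) for each individual.
observations = [
    ("brown", "black"), ("brown", "brown"), ("blue", "blond"),
    ("blue", "brown"), ("brown", "black"), ("green", "brown"),
    ("blue", "blond"), ("brown", "brown"),
]

# Count how often each (eye, hair) combination occurs.
counts = Counter(observations)

eye_levels = sorted({eye for eye, _ in observations})
hair_levels = sorted({hair for _, hair in observations})

# Contingency table as a nested dict: rows are eye colors, columns are hair colors.
# Counter returns 0 for combinations that never occur.
table = {
    eye: {hair: counts[(eye, hair)] for hair in hair_levels}
    for eye in eye_levels
}

# Print the table with a header row.
print("eye".ljust(8) + "".join(hair.rjust(8) for hair in hair_levels))
for eye in eye_levels:
    print(eye.ljust(8) + "".join(str(table[eye][hair]).rjust(8) for hair in hair_levels))
```

Each cell is the number of individuals with that combination of levels, so the cells sum to the number of observations.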

What do you notice between these two approaches?

Stacked barplot

A stacked barplot is used to compare the composition of different groups in a dataset, especially the contribution of sub-categories to the total within each main category.

Percent stacked barplot

A percent stacked barplot is ideal for comparing the relative frequencies of subgroups within categories, rather than their absolute counts.
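The heights in a percent stacked barplot are the within-category relative frequencies. A rough sketch of that calculation follows, using hypothetical counts (the group and level names are illustrative assumptions):

```python
# Hypothetical counts: rows are main categories, columns are sub-categories.
counts = {
    "group A": {"yes": 30, "no": 10},
    "group B": {"yes": 5, "no": 15},
}

# Divide each count by its row total so that every row sums to 1.
proportions = {}
for group, row in counts.items():
    total = sum(row.values())
    proportions[group] = {level: n / total for level, n in row.items()}

for group, row in proportions.items():
    print(group, {level: f"{p:.0%}" for level, p in row.items()})
```

Group A has many more observations than group B, but after dividing by the row totals the two groups can be compared on the same 0 to 100% scale.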

Side-by-side barplot

A side-by-side barplot (also called a grouped barplot) is used to visually compare the values of different subgroups across categories.

Group summary statistics

For bivariate data where one variable is numerical and the other is categorical, you can apply univariate summary statistics to each group separately.

- For example, numerical statistics for each group can be computed as:
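One possible sketch in Python, using only the standard library, is shown here. The data are hypothetical, and the choice of statistics (count, mean, median, standard deviation) is an assumption for illustration rather than the set used in the original slides.

```python
from collections import defaultdict
from statistics import mean, median, stdev

# Hypothetical data: (group label, numerical measurement).
data = [
    ("A", 4.0), ("A", 6.0), ("A", 8.0),
    ("B", 10.0), ("B", 14.0), ("B", 18.0),
]

# Split the numerical values by the categorical variable.
groups = defaultdict(list)
for label, value in data:
    groups[label].append(value)

# Apply the usual univariate summaries within each group.
summary = {
    label: {
        "n": len(values),
        "mean": mean(values),
        "median": median(values),
        "sd": stdev(values),  # sample standard deviation (n - 1 denominator)
    }
    for label, values in groups.items()
}

for label, stats in summary.items():
    print(label, stats)
```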

Graphical statistics by group

- Likewise, we can compute graphical statistics for each group.

A beeswarm plot is a type of scatterplot that shows the distribution of data points while avoiding overlap, making it easier to visualize the density and spread of the data.

Case study 🌾 Wheat seed morphological characteristics

A dataset was collected to investigate morphological characteristics associated with seed weight in a line of diploid wheat (Triticum monococcum).

- DSeed: identifier for each seed

- Weight: weight of seed (mg)

- Length: length of seed (mm)

- Diameter: diameter of seed (mm)

- Moisture: moisture content of seed (as a percentage)

- Hardness: endosperm hardness

Scatterplot

A scatterplot is a graphical representation that displays the relationship between two numerical variables by plotting individual data points on a two-dimensional graph.

Sample covariance

Sample covariance is a measure of how much two numerical variables change together.

\[ s_{xy}=\frac{1}{n-1} \sum_{i=1}^{n}\left(x_{i}-\bar{x}\right)\left(y_{i}-\bar{y}\right) \]

- Interpretation:

- When \(s_{xy} > 0\), the variables tend to increase together.

- When \(s_{xy} < 0\), one variable tends to increase when the other decreases.

- When \(s_{xy} = 0\), there is no linear relationship between the variables.

Consider the following dataset with two variables, \(x\) and \(y\):

| \(i\) | \(x\) | \(y\) |

|---|---|---|

| 1 | 1 | 10 |

| 2 | 2 | 70 |

| 3 | 3 | 100 |

\[s_{xy} = \frac{1}{2}\left[(1-2)(10-60)+(2-2)(70-60)+(3-2)(100-60)\right]=45\]

- But the magnitude of covariance is not easy to interpret since it depends on the units of the variables.
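The worked example can be checked with a short sketch. The function name `sample_covariance` and the metres-to-centimetres rescaling are illustrative assumptions; the rescaling is included only to show how the covariance depends on units.

```python
def sample_covariance(x, y):
    """Sample covariance s_xy, using the n - 1 denominator."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must have the same length, at least 2")
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    return sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y)) / (n - 1)


# The three observations from the table above.
x = [1, 2, 3]
y = [10, 70, 100]

s_xy = sample_covariance(x, y)
print(s_xy)  # 45.0

# Assumption for illustration: suppose x was recorded in metres and is
# converted to centimetres. The relationship is unchanged, but the
# covariance is multiplied by the same factor of 100.
x_cm = [100 * xi for xi in x]
s_xy_rescaled = sample_covariance(x_cm, y)
print(s_xy_rescaled)  # 4500.0
```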

Pearson’s correlation coefficient

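- The correlation coefficient is a normalised version of covariance: dividing by the sample standard deviations \(s_x\) and \(s_y\) gives \(r = s_{xy}/(s_x s_y)\), which has no units.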

- The sample Pearson correlation coefficient, denoted as \(r\), is a measure of the strength of a linear relationship between two variables (\(x\) and \(y\)).

\[r = \frac{\sum_{i=1}^n(x_i-\bar{x})(y_i - \bar{y})}{\sqrt{\sum_{i=1}^n(x_i - \bar{x})^2\sum_{i=1}^n(y_i - \bar{y})^2}}\]

- The correlation coefficient ranges from -1 to 1.
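A minimal sketch of the formula, applied to the three-point dataset from the covariance example, is given here. The function name `pearson_r` and the rescaling of \(x\) are illustrative assumptions.

```python
from math import sqrt


def pearson_r(x, y):
    """Sample Pearson correlation coefficient of two equal-length sequences."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must have the same length, at least 2")
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    syy = sum((yi - y_bar) ** 2 for yi in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined when a variable is constant")
    return sxy / sqrt(sxx * syy)


x = [1, 2, 3]
y = [10, 70, 100]

r = pearson_r(x, y)
print(round(r, 3))  # 0.982

# Unlike the covariance, r does not change when x is rescaled.
r_rescaled = pearson_r([100 * xi for xi in x], y)
print(round(r_rescaled, 3))  # 0.982
```

Here \(r = 90/\sqrt{2 \times 4200} \approx 0.982\), a very strong positive linear association, and the value stays the same whatever units \(x\) is measured in.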

Interpretation of correlation coefficient

- The sign of the correlation coefficient indicates the direction of the relationship.

- The magnitude indicates the strength of the linear relationship.

| \(\lvert r \rvert\) | Interpretation |
|---|---|
| 0.8 - 1.0 | Very strong association |
| 0.6 - 0.8 | Strong association |
| 0.4 - 0.6 | Moderate association |
| 0.2 - 0.4 | Weak association |
| 0.0 - 0.2 | Very weak association |
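The table can be read as a simple lookup on the magnitude of \(r\). In the sketch below, the function name `describe_strength` is hypothetical, and assigning a boundary value such as 0.8 to the stronger category is an assumption, since the ranges in the table overlap at their endpoints.

```python
def describe_strength(r):
    """Map a correlation coefficient to the verbal label in the table above.

    Assumption: a boundary value (e.g. exactly 0.8) is assigned to the
    stronger of the two adjacent categories.
    """
    if not -1.0 <= r <= 1.0:
        raise ValueError("a correlation coefficient must lie between -1 and 1")
    size = abs(r)  # the sign gives the direction, not the strength
    if size >= 0.8:
        return "Very strong association"
    if size >= 0.6:
        return "Strong association"
    if size >= 0.4:
        return "Moderate association"
    if size >= 0.2:
        return "Weak association"
    return "Very weak association"


print(describe_strength(0.98))   # Very strong association
print(describe_strength(-0.5))   # Moderate association
print(describe_strength(-0.08))  # Very weak association
```

Such cut-offs are rules of thumb: the label describes only the strength of the linear association, not whether the relationship is meaningful.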

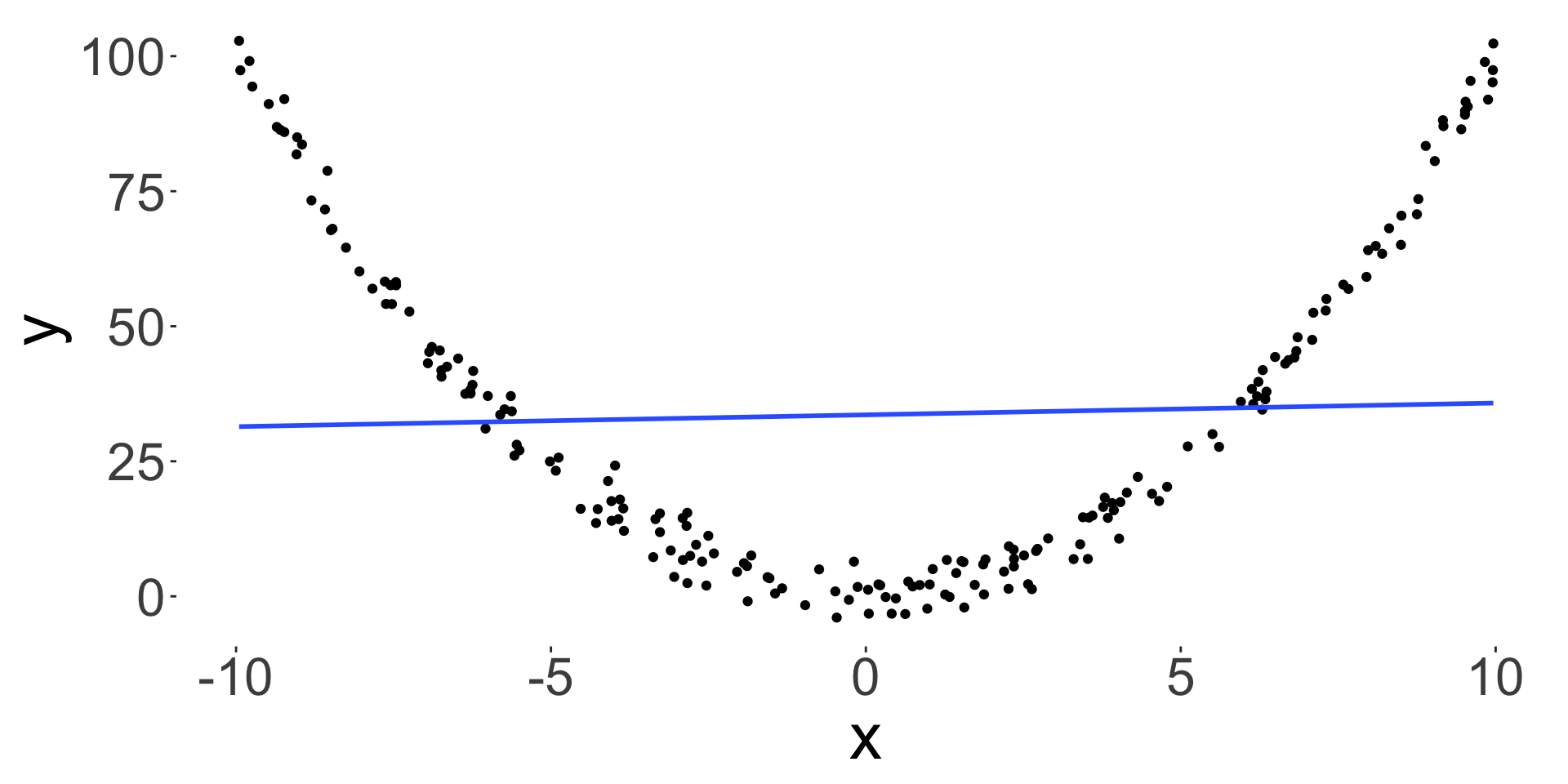

Wrong interpretation of correlation coefficient

Source: xkcd

Just because \(x\) and \(y\) are highly correlated, it does not mean that \(x\) causes \(y\) or vice versa – correlation is not causation!

- Example: ice cream sales and the rate of drowning deaths are positively correlated, but neither causes the other; both tend to rise in warm weather.

- It is also easy to get spurious correlation if computing many pairwise correlations.

- Correlation also only measures a linear relationship, so low correlation doesn’t mean that there is no relationship.

\[r = -0.0753677\]
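To illustrate that a correlation near zero does not rule out a relationship, here is a small constructed example in which \(y\) is completely determined by \(x\), yet \(r = 0\). The data are an assumption for illustration and are not the dataset behind the value reported above.

```python
from math import sqrt


def pearson_r(x, y):
    """Sample Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    syy = sum((yi - y_bar) ** 2 for yi in y)
    return sxy / sqrt(sxx * syy)


# A perfect quadratic relationship: y = x^2 on values symmetric about 0.
x = [-2, -1, 0, 1, 2]
y = [xi ** 2 for xi in x]

r = pearson_r(x, y)
print(r)  # 0.0
```

The positive and negative products in the numerator cancel exactly, so the linear association is zero even though knowing \(x\) tells you \(y\) exactly.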

Summary statistics can be misleading

- Two bivariate datasets can have exactly the same:

- marginal means,

- marginal variances, and

- correlation,

yet the relationship between the two variables can be very different.

- Always plot your data!
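A well-known illustration of this point is Anscombe's quartet. The sketch below uses its first two datasets; it is a swapped-in example and not necessarily the one shown in the original slides. Both datasets share the same \(x\) values and have nearly identical summaries, yet the first is roughly linear and the second is a smooth curve.

```python
from math import sqrt
from statistics import mean, variance

# First two datasets of Anscombe's quartet (they share the same x values).
x = [10, 8, 13, 9, 11, 14, 6, 4, 12, 7, 5]
y1 = [8.04, 6.95, 7.58, 8.81, 8.33, 9.96, 7.24, 4.26, 10.84, 4.82, 5.68]
y2 = [9.14, 8.14, 8.74, 8.77, 9.26, 8.10, 6.13, 3.10, 9.13, 7.26, 4.74]


def pearson_r(a, b):
    """Sample Pearson correlation coefficient of two equal-length sequences."""
    a_bar, b_bar = mean(a), mean(b)
    sab = sum((ai - a_bar) * (bi - b_bar) for ai, bi in zip(a, b))
    saa = sum((ai - a_bar) ** 2 for ai in a)
    sbb = sum((bi - b_bar) ** 2 for bi in b)
    return sab / sqrt(saa * sbb)


# Marginal mean, marginal variance, and correlation with x for each dataset.
summaries = {
    name: {"mean": mean(y), "variance": variance(y), "r": pearson_r(x, y)}
    for name, y in {"dataset I": y1, "dataset II": y2}.items()
}

for name, stats in summaries.items():
    print(name, {key: round(value, 2) for key, value in stats.items()})
```

The printed summaries agree to two decimal places, which is why only a plot reveals how different the two relationships are.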

Summary

| | Categorical | Numerical |
|---|---|---|
| Categorical | | |
| Numerical | | |

- Correlation \(\neq\) Causation

- Always plot the data!
